If you've ever worked in a company that uses even the slightest bit of automation, there's a good chance you've brushed up against something called Shadow AI—whether you realized it or not. It’s not a mysterious tool lurking in a server room somewhere. Rather, it’s the growing wave of artificial intelligence systems deployed within organizations without formal oversight or approval. Think of it as the unofficial side of enterprise AI—intelligent tools adopted by individual teams or employees that don't pass through IT or compliance channels. And according to a recent study, this isn’t just a side issue anymore—it’s a central one.

What's remarkable is how quickly Shadow AI gains traction. Workers who want to move faster, boost productivity, or try out an idea often turn to generative AI tools or automation software without involving the company's IT department. And who can blame them? When getting started takes nothing more than signing up or typing in a prompt, people will dive in, particularly when they're up against a deadline.

But the very accessibility that makes these tools appealing also creates a problem. According to the research highlighted by AI Business, around 55% of organizations say their staff are already using AI tools that haven’t been approved by their IT teams. That’s more than half. And once these tools become part of someone’s workflow, they’re hard to remove.

The issue isn't just about control—it’s about risk. Shadow AI exposes companies to data breaches, compliance issues, and unintended biases in decision-making processes. The bigger the company, the more fragmented the usage, and the harder it becomes to get a grip on what's being used and where.

When employees bring in AI tools without approval, they aren’t usually thinking about governance. They’re trying to solve a problem. But what’s often overlooked is where the data goes once it enters that tool. If someone pastes internal documents, emails, or customer records into an unvetted generative AI service, that information may be retained by the vendor and, depending on the provider's terms, could end up feeding into public models. It might not even be encrypted.

This isn't just theoretical. Several organizations in the past year have already had to scramble after discovering sensitive information was accidentally exposed through an unvetted AI assistant. It's not always the result of ill intent—often, it's someone just trying to speed up a task.

But the consequences can be serious. One misplaced file, one wrong prompt, and suddenly you're looking at potential regulatory violations or reputational damage. And since Shadow AI often slips under the radar, these issues don’t always get caught in time.

One of the more surprising details in the study is that a staggering 75% of IT professionals now say they’re either concerned or very concerned about the unchecked rise of AI tools. It’s not just the data risk—it’s also the fragmentation of systems and the duplication of efforts. When five different departments use five different AI tools to solve the same problem, you end up with a tangled mess that’s impossible to manage.

Even worse, these tools don’t always play well together. Integrating them into larger systems becomes a nightmare, and the lack of documentation makes auditing difficult. What began as a shortcut ends up turning into a long detour—one that often leads to rework or regulatory headaches.

The trouble is, banning these tools doesn’t work. Employees will find ways around restrictions if the tools help them do their jobs better. So, IT teams are caught in the middle: trying to enforce standards while not stifling innovation. The real challenge is finding a balance.

This isn't the kind of problem that goes away on its own. But it can be managed with the right steps.

Start by listening. Instead of hunting for violations, open a line of communication with teams and individuals. Ask what AI tools they’re using and why. You’ll likely find a mix of productivity apps, transcription tools, and more advanced platforms. The point isn’t to shut them down—it’s to understand the landscape.

Not all AI tools are equally risky. A grammar-checking app carries different risks than a tool that processes customer data. Once you have a list, assign each one a risk level based on the type of data it handles and how it integrates with other systems.
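One way to make that tiering repeatable is a simple scoring rule. The Python sketch below is a minimal illustration, assuming just two factors (how sensitive the data is and how deeply the tool connects to other systems) added into a single score. The category names, numeric weights, thresholds, and example tools are all hypothetical, not taken from the study or from any standard framework.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataSensitivity(IntEnum):
    # Hypothetical sensitivity scale: higher means more sensitive data.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3  # e.g. customer or personal data covered by regulation


class Integration(IntEnum):
    # Hypothetical scale for how deeply the tool is wired into other systems.
    STANDALONE = 0     # used in a browser tab, no connections
    FILE_UPLOAD = 1    # users upload documents to it
    SYSTEM_ACCESS = 2  # connected to email, CRM, databases, etc.


@dataclass
class AITool:
    name: str
    data: DataSensitivity
    integration: Integration


def risk_level(tool: AITool) -> str:
    """Map a tool to a coarse risk tier using an assumed additive score (0..5)."""
    score = tool.data + tool.integration
    if score <= 1:
        return "low"
    if score <= 3:
        return "medium"
    return "high"


if __name__ == "__main__":
    # Hypothetical entries from the inventory gathered in the listening step.
    inventory = [
        AITool("grammar checker", DataSensitivity.INTERNAL, Integration.STANDALONE),
        AITool("customer-insights assistant", DataSensitivity.REGULATED, Integration.SYSTEM_ACCESS),
    ]
    for tool in inventory:
        print(f"{tool.name}: {risk_level(tool)}")
```

With these assumed weights, the grammar checker scores 1 and lands in the low tier, while the assistant connected to a CRM and handling regulated customer data scores 5 and lands in the high tier. An additive score keeps the logic easy to explain to non-technical teams; an organization with stricter requirements might instead treat any regulated data as automatically high risk.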

Make it easy for teams to get the green light. A complicated, drawn-out approval process will only push users back underground. Instead, build a framework where tools can be reviewed quickly, and if they meet basic criteria (data handling, security, vendor transparency), they can be added to a whitelist.
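A lightweight review can be as simple as a checklist against those three criteria. The Python sketch below assumes each criterion is recorded as a yes/no answer from a short intake form; the field names, the `Whitelist` class, and the example tool are hypothetical illustrations, not a prescribed process.

```python
from dataclasses import dataclass, field


@dataclass
class ToolReview:
    # Hypothetical answers collected from a short intake form.
    name: str
    data_handling_ok: bool        # e.g. vendor does not train on submitted data
    security_ok: bool             # e.g. SSO, encryption, access controls
    vendor_transparency_ok: bool  # e.g. clear terms, data location, subprocessors


@dataclass
class Whitelist:
    # Hypothetical registry of approved tool names.
    approved: set[str] = field(default_factory=set)

    def review(self, tool: ToolReview) -> list[str]:
        """Approve the tool if every basic criterion is met; return any unmet ones."""
        gaps = [
            label
            for label, ok in (
                ("data handling", tool.data_handling_ok),
                ("security", tool.security_ok),
                ("vendor transparency", tool.vendor_transparency_ok),
            )
            if not ok
        ]
        if not gaps:
            self.approved.add(tool.name)
        return gaps

    def is_approved(self, name: str) -> bool:
        return name in self.approved


if __name__ == "__main__":
    whitelist = Whitelist()
    transcriber = ToolReview("meeting transcriber", True, True, False)
    print(whitelist.review(transcriber))                  # ['vendor transparency']
    print(whitelist.is_approved("meeting transcriber"))  # False
```

Returning the list of unmet criteria, rather than a bare rejection, gives the requesting team something concrete to fix, which keeps the process fast and less likely to push users back underground.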

Telling people “don’t use that” only works if you give them something better. Where possible, offer pre-vetted AI tools that meet compliance standards but are still easy to use. The goal is to direct traffic, not block it.

Awareness changes behavior. If people know the risks, they’re less likely to take unnecessary chances. Short sessions that show real-world examples of data leaks or misuse can go a long way. This isn’t about fear—it’s about making smart choices.

Shadow AI didn’t become a problem overnight, and it’s not going to disappear with a single policy. But ignoring it—or hoping it’ll work itself out—is no longer an option. The more accessible AI tools become, the more likely it is that employees will use them without thinking twice.

The best response isn’t punishment; it’s partnership. Give people the tools they need, set clear boundaries, and stay open to new ideas. Shadow AI only grows in the dark. The moment you bring it into the light, you have a chance to steer it in the right direction.

Advertisement

How to convert transformers to ONNX with Hugging Face Optimum to speed up inference, reduce memory usage, and make your models easier to deploy across platforms

Looking for practical data science tools? Explore ten standout GitHub repositories—from algorithms and frameworks to real-world projects—that help you build, learn, and grow faster in ML

Confused about MLOps? Learn how MLflow makes machine learning deployment, versioning, and collaboration easier with real-world workflows for tracking, packaging, and serving models

A detailed look at training CodeParrot from scratch, including dataset selection, model architecture, and its role as a Python-focused code generation model

How Margaret Mitchell, one of the most respected machine learning experts, is transforming the field with her commitment to ethical AI and human-centered innovation

How Stable Diffusion in JAX improves speed, scalability, and reproducibility. Learn how it compares to PyTorch and why Flax diffusion models are gaining traction

Should credit risk models focus on pure accuracy or human clarity? Explore why Explainable AI is vital in financial decisions, balancing trust, regulation, and performance in credit modeling

Explore how Google Cloud Platform (GCP) powers scalable, efficient, and secure applications in 2025. Learn why developers choose GCP for data analytics, app development, and cloud infrastructure

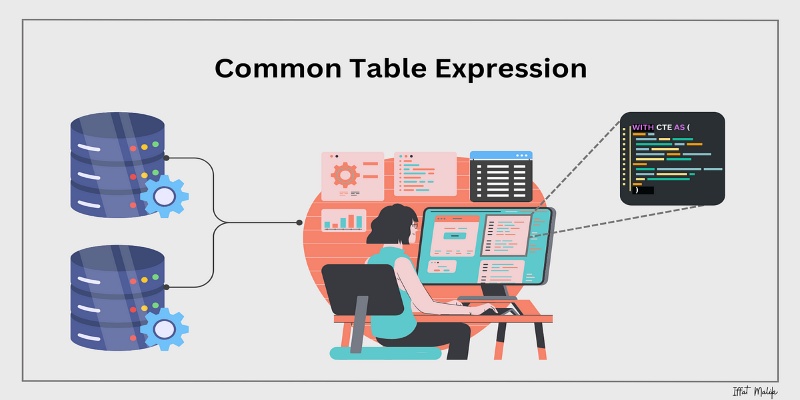

Learn what a Common Table Expression (CTE) is, why it improves SQL query readability and reusability, and how to use it effectively—including recursive CTEs for hierarchical data

How do we keep digital research accessible and citable over time? Learn how assigning DOIs to datasets and models supports transparency, reproducibility, and proper credit in modern research

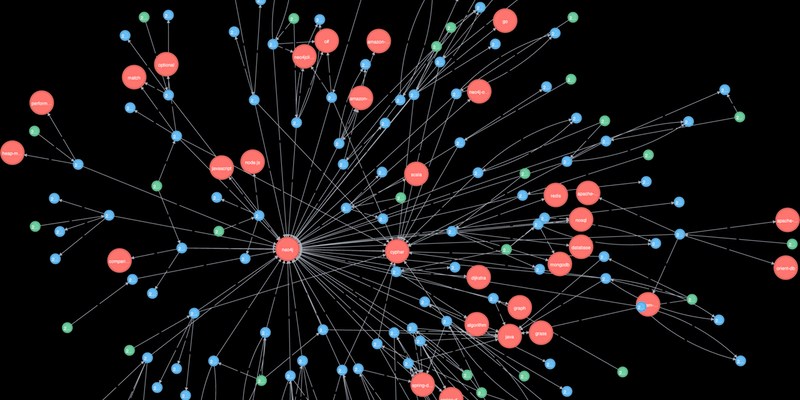

Explore how Neo4j uses graph structures to efficiently model relationships in social networks, fraud detection, recommendation systems, and IT operations—plus a practical setup guide

How Hugging Face for Education makes AI accessible through user-friendly machine learning models, helping students and teachers explore natural language processing in AI education